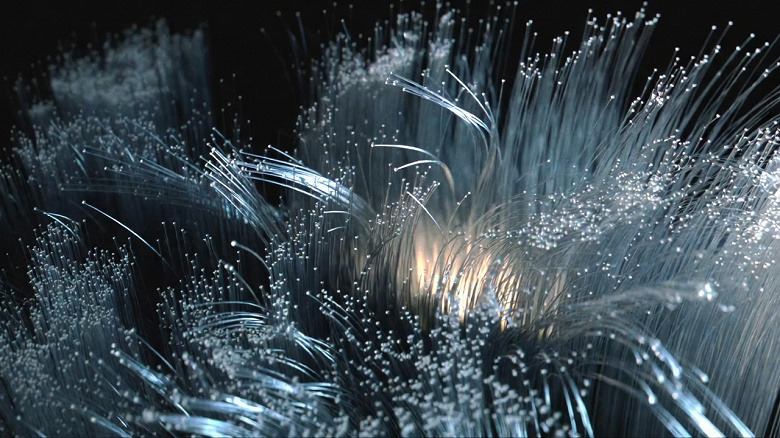

Legendary Doom and Quake developer John Carmack has floated a novel way to store and access the weights of AI models, sparking a dense technical discussion among engineers. In a post on the social network X, he outlined using a long loop of optical fiber in place of DRAM. Model weights would circulate continuously through the fiber and stream directly into a chip's Level 2 (L2) cache, trading DRAM's random access for immense sequential bandwidth.

A Modern Take on Ancient Memory

Carmack’s concept uses a fiber optic loop as the memory that continuously feeds AI chips with a stream of data. He noted that transmission rates of 256 Tbit/s over 200 km (124 miles) of single-mode fiber have already been demonstrated. Because light takes roughly 1 ms to travel 200 km of glass, such a link holds approximately 32 GB of data “in flight,” effectively stored within the fiber at any given moment, while delivering a staggering 32 TB/s of bandwidth (256 Tbit/s divided by 8 bits per byte).
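
The 32 GB and 32 TB/s figures follow from simple arithmetic: bandwidth times the time one bit spends in the fiber. The short script below reproduces them. It is a back-of-the-envelope sketch, and the group index of 1.47 for silica fiber is an assumption (a typical value), not a number taken from Carmack's post.

```python
# Back-of-the-envelope check of the fiber-loop figures.
# ASSUMPTION: light in single-mode fiber travels at about c / 1.47
# (a typical group index for silica); the post does not state an index.

SPEED_OF_LIGHT_M_S = 299_792_458   # speed of light in vacuum
GROUP_INDEX = 1.47                 # assumed, typical for silica fiber

def loop_figures(bit_rate_bps: float, length_m: float, group_index: float = GROUP_INDEX):
    """Return (bandwidth in bytes/s, one-pass delay in s, bytes in flight)."""
    bandwidth_bytes = bit_rate_bps / 8               # 8 bits per byte
    speed_m_s = SPEED_OF_LIGHT_M_S / group_index     # propagation speed in glass
    delay_s = length_m / speed_m_s                   # time for one trip along the fiber
    in_flight_bytes = bandwidth_bytes * delay_s      # data "stored" in the fiber at once
    return bandwidth_bytes, delay_s, in_flight_bytes

bw, delay, stored = loop_figures(256e12, 200e3)
print(f"bandwidth: {bw / 1e12:.0f} TB/s")
print(f"one pass:  {delay * 1e3:.2f} ms")
print(f"in flight: {stored / 1e9:.1f} GB")
```

Under these assumptions the script reports 32 TB/s, a one-pass delay of about 0.98 ms, and roughly 31 GB in flight, in line with the ~32 GB figure. The same delay is also the worst-case wait for any particular weight, which is why the scheme depends on reading weights in a predictable order rather than on low latency.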

Because the patterns for accessing weights during the inference and training of neural networks can be deterministic, a chip always knows which weights it will need next, so it can simply wait for them to arrive. Carmack finds it “amusing to consider a system with no DRAM, and weights continuously streamed into an L2 cache by a recycling fiber loop.” The idea is a modern counterpart of the mercury delay-line memory used in early computers such as the UNIVAC I, which stored data as acoustic pulses traveling through tubes of mercury. Pulses arriving at the end of a tube were regenerated and sent back in, so data kept circulating in a loop until needed, a principle Carmack’s idea revives with light instead of sound.
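
A toy simulation can make the recirculation principle concrete. In the sketch below, the class `DelayLineLoop` and the function `run_layers` are hypothetical names invented for illustration. The "fiber" is a fixed-length queue: each step, the chunk reaching the far end is handed to the chip and re-injected at the near end. A consumer that needs weights in a fixed order never requires random access; it just takes whatever arrives next.

```python
from collections import deque

# Toy model of a recirculating delay line (hypothetical names throughout).
# Each chunk that reaches the receiver is delivered and then sent around
# the loop again, so the same sequence of weights repeats indefinitely.

class DelayLineLoop:
    def __init__(self, chunks):
        self.line = deque(chunks)          # data currently "in flight"

    def tick(self):
        """Advance one slot: deliver the arriving chunk and recycle it."""
        chunk = self.line.popleft()        # chunk arrives at the chip
        self.line.append(chunk)            # regenerated and re-injected
        return chunk

def run_layers(loop, num_steps, cache_slots=2):
    """Consume chunks in the fixed order a forward pass would need them."""
    cache = deque(maxlen=cache_slots)      # stand-in for a small on-chip L2
    consumed = []
    for _ in range(num_steps):
        w = loop.tick()                    # no addressing: take the next chunk
        cache.append(w)                    # oldest entry is evicted automatically
        consumed.append(w)
    return consumed, list(cache)

consumed, cache = run_layers(DelayLineLoop(["w0", "w1", "w2"]), 6)
print(consumed)
print(cache)
```

With three chunks and six steps, the consumer sees `w0, w1, w2` twice in the same order, and the two-slot cache ends up holding only the most recent pair. The model deliberately ignores timing; it only shows why a deterministic access order suits memory that can be read solely in sequence.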

The Trade-Offs: Power, Cost, and Practicality

One of the most significant potential benefits of this approach is power efficiency. Conventional DRAM must periodically refresh its memory cells to retain data, so it draws power continuously, a substantial load in large-scale AI servers. Keeping an optical signal circulating in a fiber loop could, in principle, require significantly less power. As AI’s energy demands continue to soar, with a single query to a large model like ChatGPT estimated to consume nearly ten times the electricity of a traditional Google search, such efficiencies are becoming critical.

However, the concept faces practical hurdles. Carmack acknowledges that serving modern trillion-parameter models this way would require numerous such loops, since each one holds only about 32 GB. Furthermore, the cost of long fiber runs, along with the optical amplifiers and digital signal processors needed to maintain signal integrity, could offset the power savings.
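
How many loops is "numerous"? The sketch below divides a model's weight footprint by the ~32 GB each 200 km loop holds. The trillion-parameter size and the precisions (16-, 8-, and 4-bit weights) are illustrative assumptions, and the count ignores any overhead for error correction or framing.

```python
import math

# ASSUMPTIONS (illustrative, not from Carmack's post): a 1-trillion-parameter
# model, common weight precisions, and ~32 GB held per 200 km loop.
LOOP_CAPACITY_BYTES = 32e9
LOOP_LENGTH_KM = 200

def loops_needed(num_params: float, bytes_per_param: float,
                 capacity: float = LOOP_CAPACITY_BYTES) -> int:
    """Minimum number of loops to hold every weight once (no overhead)."""
    return math.ceil(num_params * bytes_per_param / capacity)

for label, bytes_per_param in [("16-bit", 2), ("8-bit", 1), ("4-bit", 0.5)]:
    n = loops_needed(1e12, bytes_per_param)
    print(f"{label}: {n} loops, {n * LOOP_LENGTH_KM:,} km of fiber")
```

At 16-bit precision this comes to 63 loops, or about 12,600 km of fiber; 8-bit weights need 32 loops (6,400 km) and 4-bit weights 16 loops (3,200 km). Each loop also needs its own amplifiers and transceivers, which is where the cost concern comes from.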

A Glimpse into the Future of AI Hardware

While a full-scale fiber loop memory system may not be immediately feasible, the discussion highlights a critical bottleneck in AI development: memory bandwidth is often a more significant constraint than raw computing power. Carmack suggests that fiber optic transmission may have a better growth trajectory than DRAM, which is approaching its physical limits for miniaturization.

Carmack himself points to a more practical near-term option: ganging together many inexpensive flash memory chips to supply almost any read bandwidth required, as long as weights are read a page at a time and the reads are pipelined well ahead of use. He suggests this could already be viable for inference serving, provided flash memory and AI accelerator manufacturers agree on a high-speed interface, a prospect that seems increasingly likely given the massive investment in the AI sector. Carmack’s thought experiment, while futuristic, redirects attention toward optical and hybrid approaches for overcoming the memory wall and powering the next generation of artificial intelligence.
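
The flash alternative rests on two ideas: striping weights across enough devices that their combined read rate meets the target, and issuing page reads ahead of time because the access order is known. The sketch below is a hypothetical illustration of both. The function names, the round-robin page layout, and the assumption that every device sustains the same sequential read rate are all invented for this example, not details of any vendor's interface.

```python
import math
from collections import deque

# Hypothetical sketch: striping model weights across many flash devices.
# ASSUMPTIONS: every device sustains the same sequential read rate, and
# weights are laid out page by page, round-robin across devices.

def devices_needed(target_bytes_per_s: float, per_device_bytes_per_s: float) -> int:
    """Number of devices that must be read in parallel to reach the target rate."""
    return math.ceil(target_bytes_per_s / per_device_bytes_per_s)

def stripe_schedule(num_pages: int, num_devices: int, prefetch_depth: int):
    """Yield (page, device, reads_outstanding) in the order pages are consumed.

    Page p is stored on device p % num_devices, so consecutive pages come from
    different devices and can be fetched concurrently. Because the access order
    is fixed, reads are issued up to prefetch_depth pages ahead of use.
    """
    outstanding = deque()                 # page reads issued but not yet consumed
    next_to_issue = 0
    for page in range(num_pages):
        # Top up the pipeline so reads stay prefetch_depth pages ahead.
        while next_to_issue < num_pages and next_to_issue <= page + prefetch_depth:
            outstanding.append(next_to_issue)
            next_to_issue += 1
        outstanding.popleft()             # oldest outstanding read is the page needed now
        yield page, page % num_devices, len(outstanding)

for step in stripe_schedule(num_pages=5, num_devices=2, prefetch_depth=2):
    print(step)
```

For scale, matching the 32 TB/s of the fiber loop with devices that each read a hypothetical 7 GB/s would take `devices_needed(32e12, 7e9)`, or 4,572 devices. The schedule shows the pipelining: two reads stay in flight ahead of each consumed page until the end of the model is reached.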